Quick Tip – There’s still time for students to enter our essay and video contests! The deadline is March 13th! (Note that students who entered the AI Challenge are not eligible for the essay contest, but they may enter the video contest.)

When we launched our recent AI Challenge, we wanted to see how students would use artificial intelligence to explore complex civic and economic questions.

The contest was voluntary.

Some teachers encouraged participation. Some may have required it. But ultimately, students chose to enter.

There was also real incentive.

A total of $2,750 in prize money was on the line: $1,000 for first place, $500 for second, $250 for third, and $100 each for ten additional finalists.

In other words: incentives mattered.

And yet:

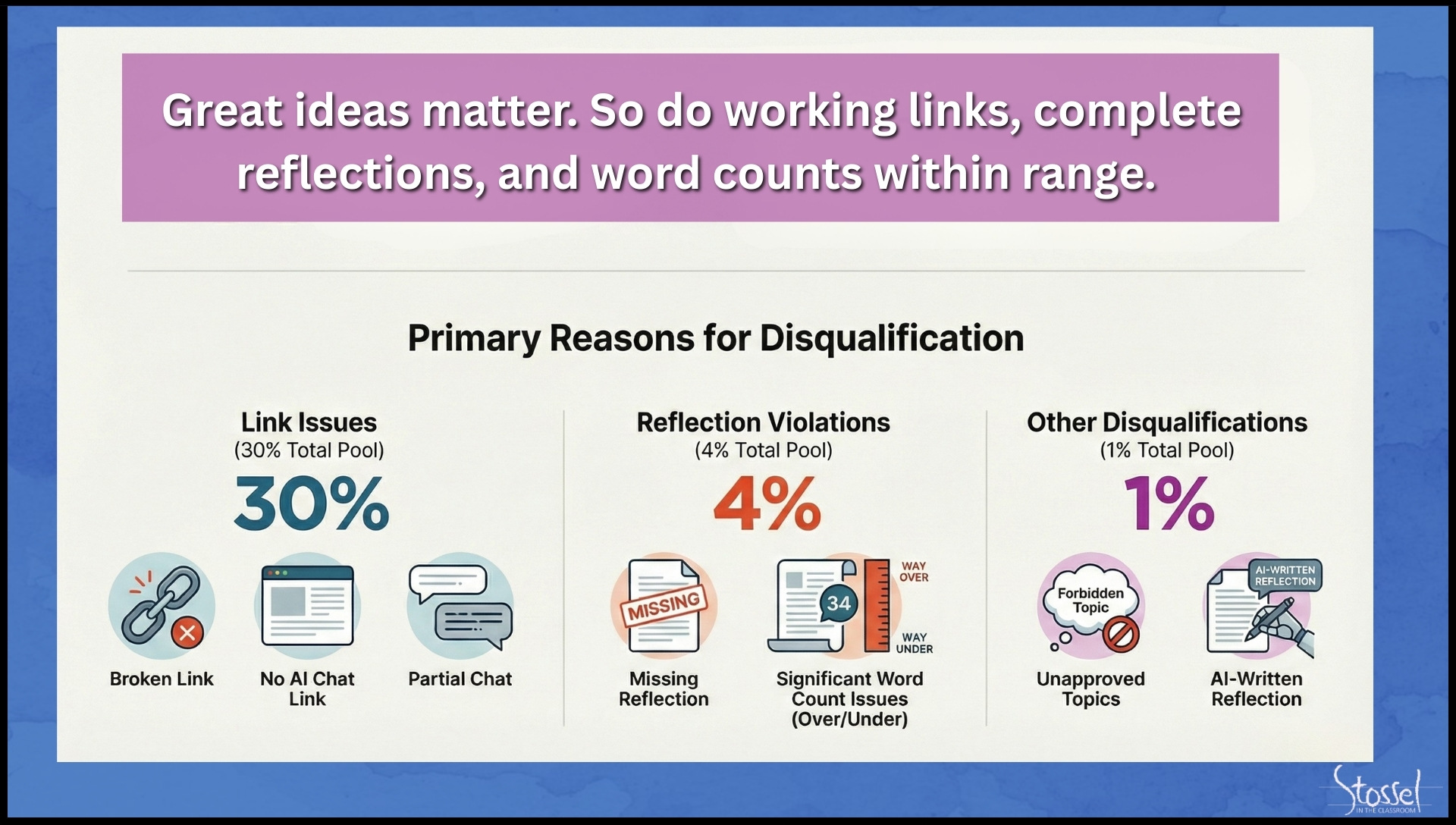

Thirty-five percent of submissions were disqualified.

Thirty percent were disqualified because students failed to submit a working transcript link.

Four percent missed the required 500–750 word count.

One percent fell into other preventable categories.

More than one in three entries failed — not because of weak ideas, but because students did not follow directions.

Students were required to:

- Submit a working link to their AI chat

- Ensure the link opened in a private/incognito window

- Write a 500–750 word reflection (not an essay!)

- Follow formatting and submission guidelines

We explicitly told students to test their links before submitting.

Many did not.

Some links led only to the ChatGPT homepage.

Some produced 404 errors.

Some had sharing permissions turned off.

One student submitted a “reflection” that read in its entirety:

“,My reflection on this is that it was not easy enough,.” (And yes, that’s exactly how it was punctuated.)

That was the full 500–750 word submission.

This isn’t about embarrassment.

It’s about preparation. (Or rather, the lack thereof.)

And AI is exposing it.

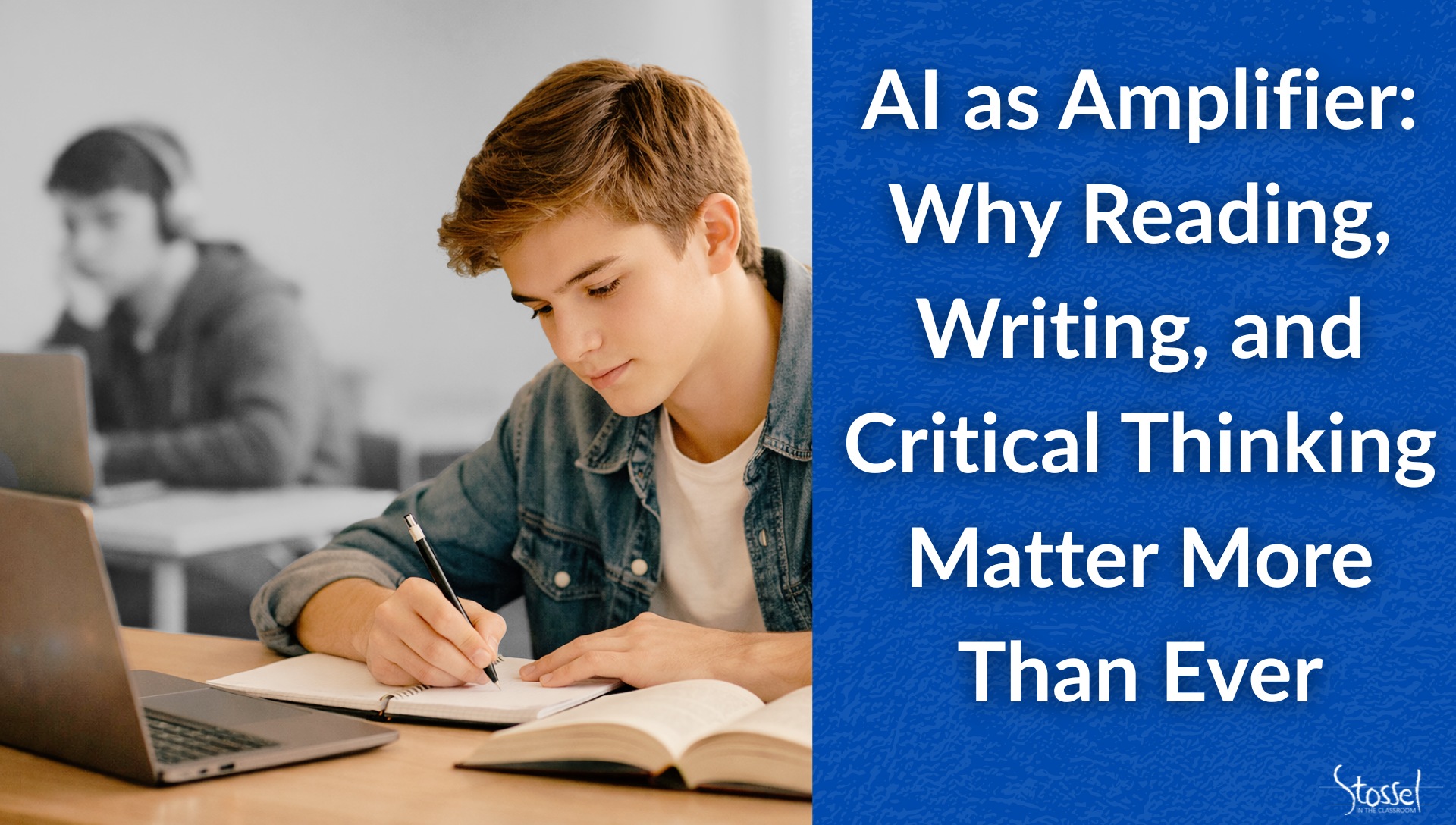

AI Is an Amplifier

There is a popular narrative that AI will level the educational playing field. And it does have amazing potential in education, under the right conditions and guidance.

AI can summarize readings.

AI can draft essays.

AI can generate arguments on both sides of an issue.

All true.

But our contest revealed something deeper:

AI amplifies habits.

Students who read carefully, followed instructions, refined their prompts, challenged the AI, and reflected thoughtfully produced impressive work. Some changed their minds. Some became more nuanced. One student wrote:

“I came in ready to debate the issue of term limits. I am going out honestly unsure—and that seems to be a valid feeling.”

That is not weakness.

That is intellectual growth.

Other students, however, labeled AI responses as “biased” simply because the evidence did not support their initial position. In several essays on tariffs, students claimed the AI was slanted because it described tariffs as a blunt instrument that should be used cautiously. But distinguishing between bias and the weight of economic evidence is itself a critical thinking skill.

AI didn’t create that misunderstanding.

It revealed it.

The Divide Isn’t Intelligence

The gap we are beginning to see isn’t about intelligence.

It’s about discipline.

It’s about whether a student is willing to:

- Read directions carefully

- Follow multi-step instructions

- Revise and iterate

- Reflect meaningfully

- Evaluate the quality of AI output

AI can read for a student.

AI can write for a student.

But AI can’t judge for a student.

If a student doesn’t have strong reading comprehension, clear writing skills, and the ability to weigh evidence, that student has no reliable way to know whether the AI’s output is insightful or shallow, balanced or misleading.

In short:

Students who already know how to think will use AI to think better.

Students who do not will use AI to avoid thinking.

That gap will widen.

Three Words That Matter

Through this challenge, three words kept resurfacing:

Agency.

Students must understand that they are directing the AI. The strongest submissions showed students actively steering the conversation, not passively accepting the first response. Some students did research before even approaching the AI, so they would have knowledge of their own to compare against what the AI gave them. They took ownership of the process.

Iteration.

The best work did not come from a single prompt. It came from follow-up questions, counterarguments, refinements, and intellectual persistence.

Validation.

Students must evaluate whether AI output is accurate, balanced, and supported. That requires reading beyond the screen, checking sources, and understanding trade-offs.

These are not “tech skills.”

They’re thinking skills.

AI simply exposes whether students have them.

Why This ALL Matters — and Why Parents Matter, Too

This contest was voluntary.

There was meaningful prize money involved.

The instructions were clear.

And still, more than one-third of entries failed at the most basic level: following directions.

If students struggle with multi-step instructions in a voluntary academic contest with financial incentives, what happens when AI tools are embedded into workplace tasks, college assignments, or civic decisions?

Teachers cannot solve this alone.

Parents, whether homeschooling or partnering with traditional schools, play a critical role in reinforcing these habits:

- You are personally responsible for your work.

- Read carefully.

- Follow directions fully.

- Finish what you start.

- Revise your work.

- Defend your reasoning.

In an AI world, these habits aren’t optional.

They’re the difference between using technology wisely and being misled by it.

The Bottom Line

Artificial intelligence isn’t a shortcut around learning.

It’s a stress test for it.

Thirty-five percent of voluntary, incentivized submissions failed because students did not follow instructions.

That shouldn’t cause panic.

But it should prompt urgency.

The brave new world will not be defined by who has access to AI.

It will be defined by who can read carefully, write clearly, think critically, and judge wisely.

Those skills have always mattered.

Now they are indispensable.

7 Practical Ways to Strengthen Thinking in an AI World

- Institute Daily Silent Reading — On Paper.

Set aside 15–20 minutes each day for uninterrupted reading, preferably from physical books. Strong readers become strong thinkers, and deep reading builds attention, vocabulary, and judgment in ways scrolling never will. Parents, this applies at home as well as at school. In fact, it’s probably even more important at home. YOU need to model reading as well. Everyone, put down the screens and pick up a book. At home, 30 minutes should be the minimum goal.

- Require Students to Explain Their Thinking Out Loud.

After completing an assignment, ask: “Why did you choose that?” or “How do you know this is accurate?” Verbal reasoning strengthens clarity and exposes weak understanding quickly.

- Build in a “Validation Step.”

Whenever AI is used, require students to verify at least one claim independently. Make checking sources and evaluating accuracy a routine, not an optional add-on.

- Make Directions a Graded Skill.

Occasionally assess only whether students followed instructions precisely. Attention to detail is not busywork — it is professional preparation.

- Reward Iteration, Not Just Polish.

Ask students to submit drafts or show how they refined a prompt. Emphasize that strong work rarely comes from the first attempt.

- Practice Writing Without AI.

Continue requiring handwritten responses or timed writing exercises. Students need to be able to organize and express ideas independently before they can effectively evaluate machine-generated writing.

- Create Productive Struggle.

Resist the urge to immediately rescue students from difficulty. Wrestling with ideas — even sitting in uncertainty — builds the intellectual muscle AI cannot supply.